“QVAC SDK and Fabric give people and companies the ability to execute inference and fine-tune powerful models on their own terms, on their own hardware, with full control of their data.” Paolo Ardoino, CEO, Tether.

Institutional AI integration is growing. Enterprises of every size are adopting intelligent applications to improve productivity. These range from general-purpose LLMs to the wrappers built on them, used across software engineering, design, and marketing to automate repetitive tasks, conduct in-depth research, and generate text and images.

However, to achieve distinctive goals, companies must fine-tune or retrain AI models on task-specific data. Small and midsize companies pay extra to fine-tune a model or to use one that is already fine-tuned. These costs, on top of regular usage fees, make up the real cost of applying machine intelligence and reduce the cost-efficiency of AI. The net return of AI is therefore open to question: does the technology actually save money, or does it simply shift the cognitive load?

Seven years after OpenAI built the first Generative Pre-trained Transformer (GPT), the defining outcome is a saturated ecosystem of LLMs: thousands of models competing for GPU compute and data-center space managed by centralized cloud services.

Beyond the safety risks of overreliance on centralized servers, economic constraints challenge even well-funded teams.

Counting the cost of progressive AI

Hyperparameter tuning can make a model's behavior more predictable, but it is rarely sufficient on its own. The true cost of fine-tuning is spread across data management, compute procurement, and scaling both.

The cost-cutting impact of parameter-efficient fine-tuning (PEFT) methods such as LoRA and QLoRA is remarkable, but at $4.50 per GPU-hour, a multi-GPU cluster quickly amplifies infrastructure costs, which can far exceed team budgets and often only become apparent at accounting time.
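Why cheaper training can still produce a large bill comes down to simple arithmetic. The Python sketch below is a back-of-the-envelope model, not a pricing tool: it assumes the $4.50 GPU-hour rate quoted above, and the 8-GPU, 72-hour run and the 4096 × 4096 layer adapted with LoRA rank 16 are hypothetical inputs chosen for illustration. It counts the trainable parameters LoRA adds to one weight matrix, rank · (d_out + d_in), against the full matrix, and multiplies out the rental cost.

```python
def cluster_cost(num_gpus: int, hours: float, rate_per_gpu_hour: float = 4.50) -> float:
    """Rental cost: every GPU in the cluster is billed for every hour of the run."""
    return num_gpus * hours * rate_per_gpu_hour


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """LoRA trains two low-rank factors, B (d_out x rank) and A (rank x d_in),
    instead of the full d_out x d_in weight matrix."""
    return rank * (d_out + d_in)


if __name__ == "__main__":
    # Hypothetical run: an 8-GPU cluster rented for 72 hours.
    print(f"8 GPUs x 72 h at $4.50/GPU-hour: ${cluster_cost(8, 72):,.2f}")

    # Hypothetical 4096 x 4096 projection matrix adapted with LoRA rank 16.
    full = 4096 * 4096
    lora = lora_trainable_params(4096, 4096, rank=16)
    print(f"full: {full:,} params; LoRA r=16: {lora:,} params ({lora / full:.2%})")
```

With these inputs, the LoRA factors are under 1% of the full matrix (131,072 versus 16,777,216 parameters), yet the rental bill still grows linearly with GPU count and hours, which is how PEFT savings can be swallowed by cluster costs.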

The financial implications of running modified models are equally significant. Users often report that running a fine-tuned model can cost up to twice as much as running its base model.

QVAC Fabric LLM is an attempt at creating cost-efficient super models

Multi-GPU clusters, cloud services, and the dependency on enterprise-grade GPUs are the main cost drivers. Tether’s QVAC team believes that reducing reliance on each of these will significantly improve the cost efficiency of AI models.

Emerging from the team's ongoing research and development is QVAC Fabric, a high-throughput inference runtime that works on everyday devices. QVAC Fabric LLM runs locally on the GPUs of consumer-grade devices and is designed for AI workloads that prioritize high performance, cost savings, and user privacy.

Early demonstrations of QVAC Fabric LLM point to an architectural shift and to more effective production use of AI, significantly reducing running costs for project teams.

The QVAC Fabric Design

Under the hood, QVAC Fabric uses a cutting-edge resource-management design for scaling AI adoption at low cost.

QVAC Fabric LLM is derived from llama.cpp and integrates a complete LoRA fine-tuning workflow into a generalized, modular framework. It is hardware-agnostic, enabling model training and inference across heterogeneous GPUs, including desktop GPUs (NVIDIA, AMD, Intel) and mobile GPUs (Mali, Adreno, and Apple's A- and M-series chips). QVAC Fabric can also switch seamlessly between API backends such as Vulkan, CUDA, and ROCm, depending on the GPU in use.
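How Fabric chooses a backend is not described here, so the sketch below only illustrates the general idea of per-vendor dispatch with a portable fallback. The preference table, the `Gpu` type, `select_backend`, and the CPU fallback are all hypothetical; the assumption is that a vendor-native API (CUDA for NVIDIA, ROCm for AMD) is preferred when the build supports it, and Vulkan covers every other GPU.

```python
from dataclasses import dataclass

# Hypothetical preference order: a vendor-native API first, then Vulkan as the
# cross-vendor path, then CPU as a last resort. Illustration only.
PREFERRED = {
    "nvidia": ["cuda"],
    "amd": ["rocm"],
}
PORTABLE_FALLBACK = ["vulkan", "cpu"]


@dataclass(frozen=True)
class Gpu:
    vendor: str  # e.g. "nvidia", "amd", "intel", "arm" (Mali), "qualcomm" (Adreno)
    name: str


def select_backend(gpu: Gpu, available: set[str]) -> str:
    """Return the first backend, in preference order, that this build supports."""
    for backend in PREFERRED.get(gpu.vendor.lower(), []) + PORTABLE_FALLBACK:
        if backend in available:
            return backend
    raise RuntimeError(f"no supported backend for {gpu.name}")


if __name__ == "__main__":
    # An NVIDIA desktop GPU with a CUDA-enabled build picks CUDA.
    print(select_backend(Gpu("NVIDIA", "desktop GPU"), {"cuda", "vulkan", "cpu"}))
    # A mobile Adreno GPU has no vendor-native entry, so it falls back to Vulkan.
    print(select_backend(Gpu("qualcomm", "Adreno"), {"vulkan", "cpu"}))
```

Keeping Vulkan as the shared fallback means a single code path can serve any GPU with a Vulkan driver, while vendor-native backends remain an optimization rather than a requirement.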

The key architectural breakthrough of QVAC Fabric is its Dynamic Tiling Algorithm, which segments large matrix operations into smaller tiles to work around memory constraints and significantly reduce computational overhead on mobile GPUs.
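The internals of QVAC's Dynamic Tiling Algorithm are not described here, so the following is a textbook loop-tiling sketch rather than QVAC's implementation. It shows the underlying idea: a large matrix product is computed block by block, so only one tile each of A, B, and C needs to be resident at a time. The `choose_tile` helper, which sizes square tiles from a memory budget, is a hypothetical stand-in for the dynamic part.

```python
import math


def choose_tile(budget_bytes: int, bytes_per_elem: int = 4) -> int:
    """Hypothetical sizing rule: the largest square tile such that one tile each
    of A, B and C fits in the given memory budget."""
    return max(1, math.isqrt(budget_bytes // (3 * bytes_per_elem)))


def tiled_matmul(a: list[list[float]], b: list[list[float]], tile: int) -> list[list[float]]:
    """Compute C = A @ B one (tile x tile) block at a time."""
    n, k = len(a), len(a[0])
    if len(b) != k:
        raise ValueError("inner dimensions must match")
    m = len(b[0])
    c = [[0.0] * m for _ in range(n)]
    for i0 in range(0, n, tile):          # block row of C
        for j0 in range(0, m, tile):      # block column of C
            for p0 in range(0, k, tile):  # step along the shared dimension
                # Accumulate the product of one block of A and one block of B
                # into the current block of C; only these tiles are touched.
                for i in range(i0, min(i0 + tile, n)):
                    for p in range(p0, min(p0 + tile, k)):
                        a_ip = a[i][p]
                        for j in range(j0, min(j0 + tile, m)):
                            c[i][j] += a_ip * b[p][j]
    return c


if __name__ == "__main__":
    a = [[1, 2, 3], [4, 5, 6]]
    b = [[7, 8], [9, 10], [11, 12]]
    tile = choose_tile(budget_bytes=48)  # 48 bytes -> 2 x 2 tiles of 4-byte floats
    print(tile, tiled_matmul(a, b, tile))
```

On a real GPU the tile loops would map to workgroups and on-chip memory; this pure-Python version only demonstrates that the tiled result matches an ordinary matrix product for any tile size.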

QVAC Fabric’s edge-first approach readies AI adoption for the billions of people who rely on consumer-grade devices. It scales superintelligence to resource-limited scenarios and saves users recurring GPU rental costs: regular usage requires only a one-time hardware investment, although hardware upgrades may be needed for advanced use, depending on the scale.

Enter QVAC SDK, the interface to QVAC Fabric

QVAC SDK is a unified, easy-to-use software development kit for building applications that run on any consumer device and operating system. With QVAC SDK, anyone can use pre-built modules to integrate and fine-tune any open-source model, with QVAC Fabric as the building block, unlocking unprecedented utility from consumer hardware. In its first release, QVAC SDK will support LLMs, text-to-speech, OCR, RAG, transcription, translation, text embeddings, delegated inference, and several other features, with an aggressive roadmap to support a far wider set of capabilities in the future.

Putting the technology to use, Tether is developing a range of products built with QVAC SDK and Fabric, two of which are already released: QVAC Workbench and QVAC Health. To further its push to build utilities on QVAC Fabric and SDK, the team also intends to issue several grants and expand strategic collaborations with global and local partners.

QVAC Workbench

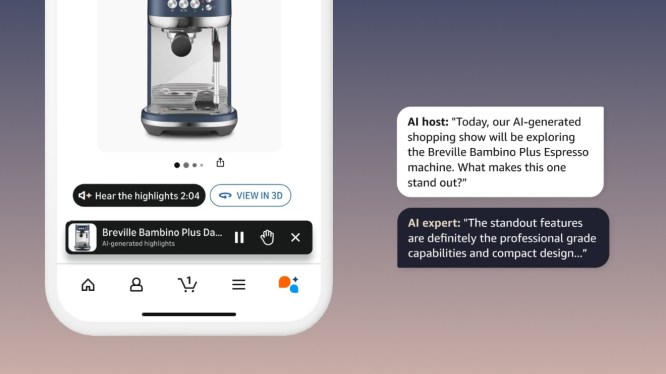

QVAC Workbench is QVAC SDK and Fabric in motion. Workbench is Tether’s general-purpose, AI-powered application that generates responses from prompts. It is a local-first, customizable AI assistant: a truly private AI work buddy and one of a set of AI products built on QVAC technology.

QVAC Workbench handles scheduling, writing, coding, and research tasks. It runs inference on the GPUs of the devices on which it is installed. QVAC Workbench can be used across many sectors, including financial investing, and is optimized for both mobile and desktop devices.

Workbench runs on a fully peer-to-peer protocol powered by Pear, a P2P runtime built with the Holepunch stack. The Pear integration enables delegated inference in QVAC Workbench, allowing users to move tasks seamlessly and securely between devices based on device capacity or convenience. For instance, you can start a task on your Android or iOS phone and delegate the heavy lifting to your desktop workstation at home.
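The decision behind delegated inference can be sketched as a simple capacity check. This is a hypothetical illustration, not the Pear or QVAC API: the `Device` type, the `pick_executor` function, and the rule of delegating to the reachable peer with the most free memory are all assumptions. A production scheduler would likely weigh more signals, such as battery, latency, and user preference.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    """Hypothetical view of a device in the user's P2P network."""
    name: str
    free_memory_gb: float   # memory available for weights and activations
    reachable: bool = True  # assumed: currently connected to the requesting device


def pick_executor(local: Device, peers: list[Device], required_gb: float) -> Device:
    """Run locally if the model fits; otherwise delegate to the reachable peer
    with the most free memory that can hold it."""
    if local.free_memory_gb >= required_gb:
        return local
    candidates = [p for p in peers if p.reachable and p.free_memory_gb >= required_gb]
    if not candidates:
        raise RuntimeError("no reachable device has enough memory for this model")
    return max(candidates, key=lambda p: p.free_memory_gb)


if __name__ == "__main__":
    phone = Device("phone", free_memory_gb=3.0)
    desktop = Device("home desktop", free_memory_gb=20.0)
    laptop = Device("laptop", free_memory_gb=12.0, reachable=False)

    # A small model fits on the phone, so it runs locally.
    print(pick_executor(phone, [desktop, laptop], required_gb=2.0).name)
    # A larger model does not, so the heavy lifting is delegated to the desktop.
    print(pick_executor(phone, [desktop, laptop], required_gb=8.0).name)
```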

QVAC Workbench was first released in October 2025 alongside QVAC Genesis I, the world’s largest library of synthetic datasets for training STEM-focused AI models, covering 19 educational fields. While early builds of QVAC Workbench show promise, Tether plans to commit significant internal resources, investment, and public grants to evolve and scale the extremely ambitious vision of QVAC’s open-source ecosystem.

QVAC Health

QVAC Health is a personalized AI health assistant that helps users better understand their routine health upkeep from the data they provide. QVAC Health is a win for privacy and cost savings: it stores user data securely on the user’s own device, creating a trustworthy environment in which users can share their most detailed data. Using that data in a closed loop, it grows steadily more intelligent and tailors its recommendations to each user.

Trust, built through privacy, enables users to rely on AI for routine well-being assistance. Features include logging meals and workouts, scheduling medications, and scanning lab reports with OCR to log biomarkers and other health and fitness metrics.

Fabric LLM is unique: evolving BitNet's 1-bit architecture to scale inference and LoRA fine-tuning on heterogeneous consumer GPUs

On March 17th, 2026, Tether’s AI research team announced a major breakthrough that reshapes how modern AI models can be deployed: the world’s first LoRA (Low-Rank Adaptation) fine-tuning framework for Microsoft’s BitNet, designed to run seamlessly across many types of GPU hardware and operating systems, and even on mobile edge devices such as smartphones.

This development removes the long-standing dependency on enterprise-grade infrastructure, which has limited large language model fine-tuning to multi-GPU servers running NVIDIA hardware and the CUDA ecosystem. By enabling cross-platform execution, the framework makes state-of-the-art (SOTA) AI models accessible to a much broader community, including students, researchers, developers, and even general consumers who previously lacked access to such infrastructure.

BitNet introduces an ultra-efficient 1-bit-class architecture (about 1.58 bits per weight) that constrains model weights to the ternary values −1, 0, and +1, dramatically reducing memory usage and computational overhead compared to full-precision models. Combining it with LoRA (Low-Rank Adaptation) reduces overall memory and compute requirements further. The framework replaces CUDA dependencies with Vulkan support, unlocking compatibility with AMD, Intel, Apple devices, and mobile GPUs for both training and inference.
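The ternary weights can be illustrated with the absmean quantization described for BitNet b1.58: divide a weight matrix by the mean absolute value of its entries, round, and clip to {−1, 0, +1}. The Python sketch below quantizes a flat list of weights. It is a minimal illustration of that published scheme, assuming a single per-tensor scale, and not the kernel QVAC Fabric uses.

```python
def absmean_ternary(weights: list[float], eps: float = 1e-8) -> tuple[list[int], float]:
    """Quantize weights to {-1, 0, +1} using absmean scaling.

    gamma is the mean absolute weight; each weight is divided by gamma,
    rounded to the nearest integer (Python rounds halves to even), and
    clipped to [-1, 1].
    """
    gamma = sum(abs(w) for w in weights) / len(weights)
    ternary = [max(-1, min(1, round(w / (gamma + eps)))) for w in weights]
    return ternary, gamma


def dequantize(ternary: list[int], gamma: float) -> list[float]:
    """Approximate reconstruction: each ternary value times the shared scale."""
    return [t * gamma for t in ternary]


if __name__ == "__main__":
    w = [0.9, -0.05, -1.2, 0.3]
    q, gamma = absmean_ternary(w)
    print(q, round(gamma, 4), [round(x, 4) for x in dequantize(q, gamma)])
```

Because every weight collapses to one of three values plus one shared scale, matrix multiplication reduces mostly to additions and subtractions, which is where much of the compute saving comes from.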

Tether’s research lays the groundwork for BitNet to perform both inference and fine-tuning on a wider range of consumer GPUs. The framework successfully fine-tuned a 13-billion-parameter model on an iPhone 16, demonstrating that workloads once reserved for data centers can now run on consumer hardware. This shift reduces costs, increases privacy, minimizes vendor lock-in, and establishes high-performance AI as a truly cross-platform capability rather than one confined to server farms.
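A quick weight-memory estimate shows why a 13-billion-parameter model becomes plausible on a phone, assuming it is a ternary BitNet model. The sketch counts weight storage only; the 2-bit packing row is an assumed practical layout, and log2(3) ≈ 1.58 bits is the theoretical minimum for three values.

```python
import math


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Storage for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS = 13e9  # the 13-billion-parameter model mentioned above

for label, bits in [
    ("FP16", 16),
    ("ternary, assumed 2-bit packing", 2),
    ("ternary, log2(3) bits", math.log2(3)),
]:
    print(f"{label:32s} {weight_memory_gb(PARAMS, bits):6.2f} GB")
```

The estimate gives 26 GB for FP16 weights versus roughly 2.6 to 3.3 GB for ternary weights, about an 8 to 10 times reduction. Activations, the KV cache, LoRA adapters, and any tensors kept at higher precision add to the real footprint.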

Beyond finance, Tether continues to make bold moves in AI and communication technologies. QVAC Fabric, its SDK, and the BitNet LLM fine-tuning framework are only steps toward equitable superintelligence for billions of humans and AI agents, and the firm has earmarked substantial resources for further development of open-source, edge-first AI solutions.