Adobe on Thursday launched the latest iteration of its Firefly family of image generation AI models, a model for generating vectors, and a redesigned web app that houses all its AI models, plus some from its competitors. There’s also a mobile app for Firefly in the works.

The new Firefly Image Model 4, Adobe says, improves on its predecessors in terms of quality, speed, and the amount of control over the structure and style of outputs, camera angles, and zoom. It can generate images in resolutions up to 2K. There’s also a tweaked, more capable version of this model called Image Model 4 Ultra that can render complex scenes consisting of small structures and lots of detail.

Alexandru Costin, VP of Generative AI at Adobe, said that the company trained the models with a higher order of magnitude of compute to enable them to generate more detailed images. He added that, compared with previous-generation models, the new models render text in images more accurately and let users supply reference images so the model generates pictures in that style.

The company is also making its Firefly video model, which launched in limited beta last year, available to everyone. It lets users generate video clips from a text prompt or image, use camera angles, specify start and end frames to control shots, generate atmospheric elements, and customize motion design elements. The model can generate video clips from text at resolutions up to 1080p.

Meanwhile, the Firefly Vector Model can create editable vector-based artwork and generate and iterate on variations of logos, product packaging, icons, scenes, and patterns.

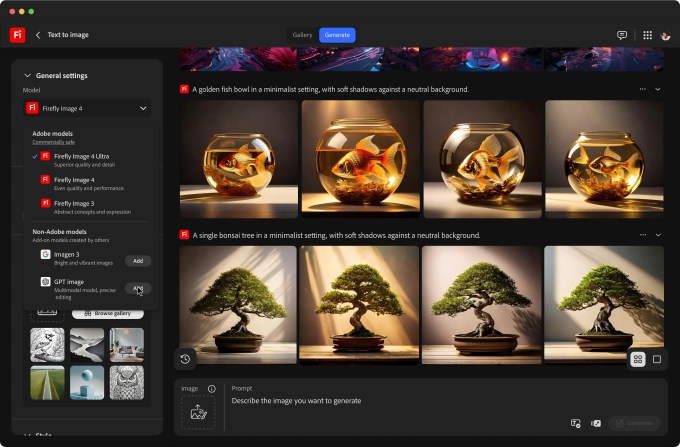

Notably, Firefly’s web app gives you access to all these models as well as a few image and video generation models from OpenAI (GPT image generation), Google (Imagen 3 and Veo 2), and Black Forest Labs (Flux 1.1 Pro). Users can switch between any of these models at any point, and images generated by any model will have content credentials attached to them. The company hinted that other AI models may be added to the web app in the future.

Adobe is also publicly testing a new product called Firefly Boards, a canvas for ideation or moodboarding. It lets users generate or import images, remix them, and collaborate with others — similar to what you can do with other AI-based ideaboards from Visual Electric, Cove, or Kosmik. Boards is available through the Firefly web app.

The company said these new models would soon be integrated into its product portfolio, but didn’t give a timeline for the rollout.

Adobe is also making its Text-to-Image API and Avatar API generally available, and said a new Text-to-Video API is now available for use in beta. These APIs are available through the company’s Firefly Services collection of APIs, tools, and services.

Adobe is also testing a web app called Adobe Content Authenticity that lets users attach credentials to their work to indicate ownership and attribution through metadata. Users can also indicate whether AI companies can use their images for AI model training.